Real-Time Sign Language Interpretation System

Project Overview

At Prajnja Softech LLP, we are pursuing a technology-driven initiative with a clear human-centered mission: to enable direct, dignified communication between the deaf and mute community and the hearing world without relying on intermediaries. Communication is a fundamental human right, yet millions of sign language users depend on others to convey even basic needs, thoughts, and emotions. Our project addresses this gap with a real-time, AI-powered sign language interpretation system that supports two-way communication: it converts sign language into text and voice, and spoken language into text and sign representations. Guided by principles of accessibility, privacy, and ethical AI deployment, we aim to deliver an inclusive, scalable solution that enables natural, respectful communication in everyday settings.

The Problem

Our Solution

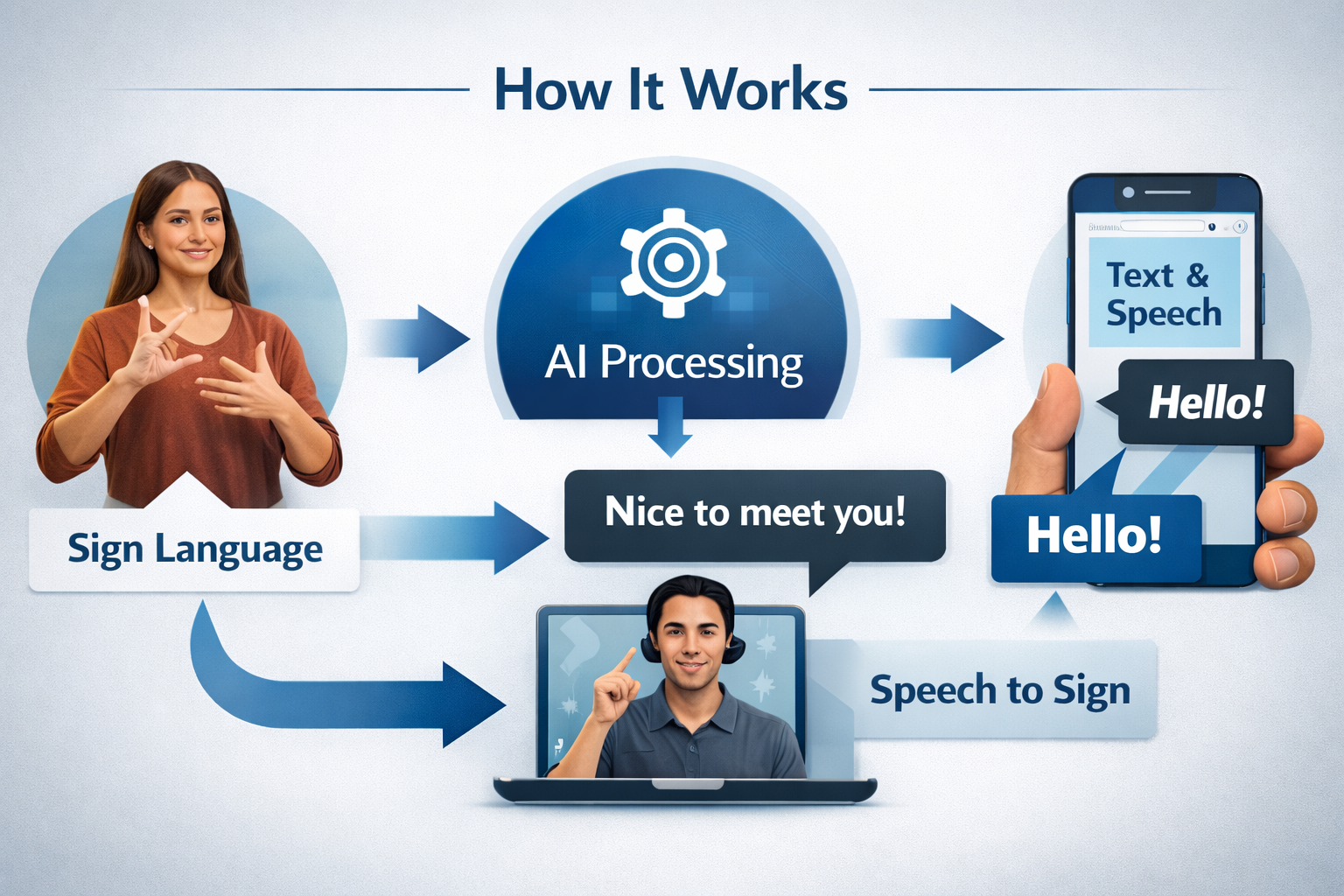

To bridge this gap, we are developing a mobile-first, AI-assisted communication platform that enables direct, two-way interaction between sign language users and non-signers without human interpreters. Using a device camera, the system interprets sign language into text and spoken audio in real time, while spoken language is transcribed and converted into visual or avatar-based sign representations.

The platform is designed with privacy, accessibility, and real-world usability as core principles. Developed in phases, it prioritizes reliable performance and ethical deployment, with the aim of delivering a practical, everyday communication tool rather than a limited prototype.

How the System Works

The system is being developed in phases to manage the complexity of real-time sign language interpretation. In the first phase, the mobile camera captures hand gestures, which machine learning models process to recognize sign patterns and convert them into readable text in real time. The next phase adds text-to-speech synthesis, turning the interpreted text into clear spoken audio for hearing users.
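One practical detail of the sign-to-text step is that a per-frame classifier tends to flicker between labels, so its raw output cannot be shown directly as text. The sketch below illustrates one common way to handle this: a sliding-window majority vote that emits a sign only once it has been stable for several frames. It is an illustration under stated assumptions, not a description of the project's actual models. The class `SignStabilizer`, the function `frames_to_text`, the window sizes, and the example labels are all hypothetical, and the per-frame labels are assumed to come from some upstream recognition model.

```python
from collections import Counter, deque
from typing import Deque, Iterable, List, Optional


class SignStabilizer:
    """Turns noisy per-frame labels into a stream of confirmed signs.

    Hypothetical helper: assumes an upstream model yields one label per
    camera frame, or None when no sign is detected.
    """

    def __init__(self, window: int = 5, min_votes: int = 4) -> None:
        if not 0 < min_votes <= window:
            raise ValueError("min_votes must be between 1 and window")
        self.min_votes = min_votes
        self.recent: Deque[Optional[str]] = deque(maxlen=window)
        self.last_emitted: Optional[str] = None

    def push(self, label: Optional[str]) -> Optional[str]:
        """Add one frame's label; return a newly confirmed sign, else None."""
        self.recent.append(label)
        winner, votes = Counter(self.recent).most_common(1)[0]
        if votes < self.min_votes:
            return None  # no label is stable enough yet
        if winner is None:
            # A stable pause: allow the same sign to be emitted again later.
            self.last_emitted = None
            return None
        if winner == self.last_emitted:
            return None  # still holding the sign we already emitted
        self.last_emitted = winner
        return winner


def frames_to_text(labels: Iterable[Optional[str]]) -> str:
    """Collapse a sequence of per-frame labels into space-separated text."""
    stabilizer = SignStabilizer()
    words: List[str] = []
    for label in labels:
        sign = stabilizer.push(label)
        if sign is not None:
            words.append(sign)
    return " ".join(words)


# Example: one misclassified frame inside THANKS does not produce extra text.
frames = ["HELLO"] * 6 + [None] * 5 + ["THANKS"] * 3 + ["HELLO"] + ["THANKS"] * 3
print(frames_to_text(frames))
```

A larger window makes the output steadier but adds latency, which matters for real-time use. The resulting text could then be handed to any text-to-speech engine in the following phase.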

Subsequent phases enable reverse communication by converting spoken language into text and then into visual or avatar-based sign representations. As the platform evolves, it incorporates facial expressions, body posture, and contextual cues to improve linguistic accuracy and natural conversation. The final phase focuses on real-time optimization, scalability, and efficient on-device and cloud processing to ensure reliable performance at scale.
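As a rough illustration of the reverse direction, the sketch below maps transcribed text to a sequence of sign clip identifiers and falls back to fingerspelling for words that are not in the lexicon. Everything here is hypothetical: the `SIGN_LEXICON` contents, the `SIGN_*` and `LETTER_*` identifiers, and the function name are placeholders, not part of the platform. Word-by-word lookup is also a deliberate simplification, because sign languages have their own grammar and word order, which is why the later phases bring in facial expressions, posture, and context.

```python
import re
from typing import Dict, List

# Hypothetical lexicon: English word -> identifier of a sign clip or avatar animation.
SIGN_LEXICON: Dict[str, str] = {
    "hello": "SIGN_HELLO",
    "thank": "SIGN_THANK",
    "you": "SIGN_YOU",
    "help": "SIGN_HELP",
}


def text_to_sign_sequence(text: str, lexicon: Dict[str, str] = SIGN_LEXICON) -> List[str]:
    """Map transcribed text to sign identifiers, fingerspelling unknown words."""
    sequence: List[str] = []
    # Keep only alphabetic words; punctuation and digits are ignored in this sketch.
    for word in re.findall(r"[a-z]+", text.lower()):
        if word in lexicon:
            sequence.append(lexicon[word])
        else:
            # Fallback: spell the word out one letter at a time.
            sequence.extend(f"LETTER_{ch.upper()}" for ch in word)
    return sequence


print(text_to_sign_sequence("Hello, Ana!"))
```

The fingerspelling fallback keeps names and rare words representable even with a small starting vocabulary, at the cost of slower output.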

Technology and Research Foundation

This project operates at the intersection of computer vision, machine learning, and accessibility engineering, addressing the significant technical challenges of real-time sign language interpretation on mobile devices. Achieving reliable performance requires substantial computation, diverse training data, and careful handling of real-world variability.

Accordingly, the initiative is pursued as a research-driven, ethically grounded effort rather than a simplified prototype. It emphasizes continuous improvement, transparent AI practices, and collaboration with academics, accessibility experts, and the Deaf community to deliver a trustworthy, long-term assistive technology with real-world impact.

Impact and Vision

In the near term, the platform is intended to help Deaf and hard-of-hearing individuals communicate independently, reducing friction in everyday interactions and strengthening confidence across social, professional, and public settings. Over time, it aims to support more inclusive workplaces, improved healthcare communication, and accessible education through multilingual, globally adaptable deployment.

Ultimately, success is defined not by technical metrics alone, but by the extent to which people feel heard, respected, and included—restoring dignity, confidence, and equal access to communication.

Roadmap

The roadmap begins with a minimum viable product focused on sign language–to–text conversion using a limited vocabulary and controlled testing, prioritizing accuracy and usability. The next phase involves pilot deployments with real users, where feedback and partnerships with NGOs and institutions guide refinement under real-world conditions.

The final phase expands the platform to full bidirectional communication, multilingual support, and scalable architecture. Each stage builds on validated results rather than assumptions, ensuring responsible and sustainable progress toward an inclusive communication solution.

Details & Info

Prajnja Softech LLP is developing a mobile-first, AI-driven sign language interpretation platform to enable direct, dignified communication between the deaf and mute community and the hearing world—without human intermediaries. Grounded in the principle that communication is a fundamental human right, the project addresses exclusion caused by technological barriers rather than lack of ability.

The system supports real-time, two-way communication by translating sign language into text and speech, and converting spoken language back into text and visual sign representations. Developed through a phased, research-driven approach with strong emphasis on privacy, ethical AI, and real-world usability, the initiative aims to reduce social isolation and expand access to education, healthcare, and employment by empowering individuals to communicate independently and confidently.